Data Automation Guide

Understand data automation, helpful tools, and use cases.

Data automation is the use of technology to handle tasks like data collection, transformation, and analysis. Automation reduces the manual work involved in data management, and is especially beneficial for maintaining data quality and accuracy at scale.

ETL, quality assurance checks, system backups, and fraud detection all fall under the umbrella of data automation.

Data automation does depend on some upfront, manual work – that is, defining the rules, logic, and workflows that will dictate how data tasks are automated.

For example, automating data collection allows businesses to process millions of events from numerous sources. But should you be collecting everything? Probably not. An e-commerce company would likely be concerned with events like “product_viewed” or “checkout_started,” while a B2B SaaS company might be more interested in “account_created” or “trial_started.” Which data to track is up to internal teams to define, along with the appropriate naming conventions for each event. Automation can help ensure that companies stick to those predefined standards, but human input is needed for the initial setup.
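As a rough illustration of enforcing predefined standards, the sketch below checks an incoming event name against a small tracking plan. The plan contents, the snake_case rule, and the `check_event` helper are assumptions made for this example, not part of any specific product.

```python
import re

# Hypothetical tracking plan: the event names a team has agreed to collect.
TRACKING_PLAN = {
    "product_viewed",
    "checkout_started",
    "account_created",
    "trial_started",
}

# Assumed naming convention: lowercase snake_case, e.g. "product_viewed".
SNAKE_CASE = re.compile(r"^[a-z]+(_[a-z]+)*$")


def check_event(name: str) -> list[str]:
    """Return the violations for one event name (empty if it conforms)."""
    violations = []
    if not SNAKE_CASE.fullmatch(name):
        violations.append("name is not snake_case")
    if name not in TRACKING_PLAN:
        violations.append("event is not in the tracking plan")
    return violations


if __name__ == "__main__":
    for event in ("product_viewed", "Product Viewed", "page_scrolled"):
        print(event, check_event(event))
```

The rules themselves still come from people; the code only applies them consistently to every event.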

Along with what data to track, companies also need to think through how they stay compliant with privacy laws like the GDPR or CCPA. Automation can help here, for instance by instantly masking data that would constitute personally identifiable information.
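To make the masking idea concrete, here is a minimal sketch that redacts sensitive values before an event is stored. The list of sensitive keys, the email pattern, and the `mask_event` helper are illustrative assumptions; a real compliance setup would need a much more thorough definition of personal data.

```python
import re

# Assumed set of property names that always hold personal data.
SENSITIVE_KEYS = {"email", "phone", "ssn"}

# Simple email pattern used to catch addresses embedded in free text.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

REDACTED = "[REDACTED]"


def mask_event(properties: dict) -> dict:
    """Return a copy of the event properties with sensitive values masked."""
    masked = {}
    for key, value in properties.items():
        if key.lower() in SENSITIVE_KEYS:
            # Mask the whole value for known sensitive fields.
            masked[key] = REDACTED
        elif isinstance(value, str):
            # Mask email addresses that appear inside other text fields.
            masked[key] = EMAIL.sub(REDACTED, value)
        else:
            masked[key] = value
    return masked


if __name__ == "__main__":
    print(mask_event({"email": "a@b.com", "note": "contact jane@example.com now", "total": 42}))
```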

In short, here’s how data automation works:

First identify the specific tasks and processes you plan to automate, and the benefits of doing so. Be sure to identify key stakeholders to understand the needs and expectations at play.

Identify all your data sources and the systems involved in internal workflows (e.g., where data is currently stored, how it’s integrated, what types of data are collected).

Choose the right tools and platforms for your needs (e.g., ETL tools, journey orchestration, scripting languages). Be sure to consider things like scalability, ease of use, and compatibility with existing systems, along with how data will be transformed into a unified, standardized, and usable format.

Use flowcharts, diagrams, or workflow visualization tools to map out and design automation processes (e.g., the sequence of tasks, dependencies, event triggers).

Implement data quality checks to flag incorrect or inconsistent data.

Set up archiving and retention policies to manage historical data.

Test automated processes in a controlled environment before deploying them in production.

Provide documentation for troubleshooting, maintenance, and onboarding new team members.

Iterate on the automation setup based on feedback, changing requirements, or the discovery of opportunities for improvement.
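Two of the steps above, data quality checks and retention policies, lend themselves to a short sketch. The required fields, the 365-day retention window, and both helper functions are assumptions chosen for illustration; actual rules depend on your data and legal requirements.

```python
from datetime import datetime, timedelta, timezone

# Assumed fields every record must carry.
REQUIRED_FIELDS = ("event", "user_id", "timestamp")

# Assumed retention window for historical data.
RETENTION = timedelta(days=365)


def quality_issues(record: dict) -> list[str]:
    """Flag missing fields and timestamps that are not datetime objects."""
    issues = [f"missing {field}" for field in REQUIRED_FIELDS if not record.get(field)]
    timestamp = record.get("timestamp")
    if timestamp is not None and not isinstance(timestamp, datetime):
        issues.append("timestamp is not a datetime")
    return issues


def is_expired(record: dict, now: datetime) -> bool:
    """Return True when a record is older than the retention window."""
    return now - record["timestamp"] > RETENTION


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    record = {"event": "trial_started", "user_id": "u1", "timestamp": now - timedelta(days=30)}
    print(quality_issues(record), is_expired(record, now))
```

Running checks like these in a test environment first, as the list suggests, makes it easier to tune the rules before they affect production data.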

Data automation simplifies what would otherwise be an insurmountable set of tasks. Its ability to collect, transform, and analyze an ever-growing volume of data is essential in a fast-paced, digital environment. In short, data automation is important because it:

Streamlines time-consuming and repetitive tasks, which would be difficult (or near impossible) to perform manually while ensuring data quality.

Empowers organizations to scale, and manage evolving tech stacks (e.g., bypassing the need to manually build integrations, enabling real-time data processing).

Helps maintain compliance with privacy regulations to ensure data remains safe and secure (e.g., honoring data subject rights via automated deletion and user suppression across Segment and your connected tech stack).

Helps organizations remain adaptable and agile amid fast-changing circumstances.

Data automation offers many opportunities to modernize and scale, but these can come with challenges. Here's how Segment solves the most frustrating challenges that come with building a data automation solution.

Segment’s CDP is able to collect, clean, and consolidate data from multiple sources, creating a single source of truth for an organization. With Protocols, organizations are able to enforce their tracking plan at scale, ensuring that each event collected adheres to their predefined naming conventions. Any event that doesn’t meet these standards is flagged as a violation, and violations are also displayed in aggregate to help spot trends and root causes.

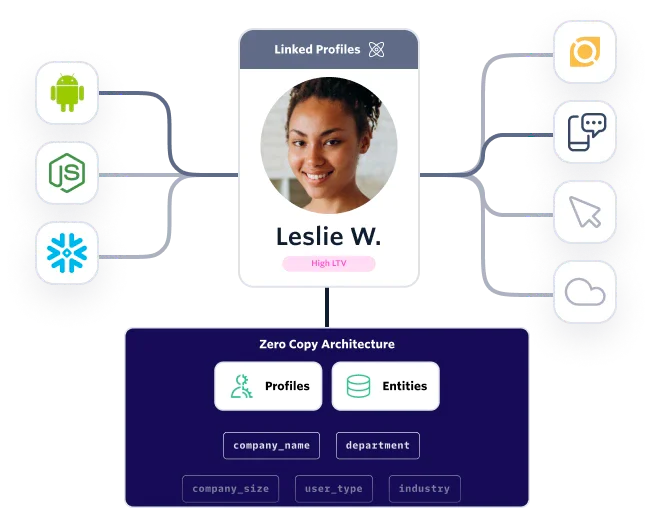
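The idea of viewing violations in aggregate can be sketched generically. The snippet below is not how Protocols is implemented; it assumes violations arrive as hypothetical `(event_name, reason)` pairs and simply counts them so the most frequent problems surface first.

```python
from collections import Counter


def summarize_violations(violations: list[tuple[str, str]]) -> list[tuple[tuple[str, str], int]]:
    """Count identical (event_name, reason) pairs, most frequent first."""
    return Counter(violations).most_common()


if __name__ == "__main__":
    # Hypothetical violations collected over some time window.
    observed = [
        ("Product Viewed", "name is not snake_case"),
        ("page_scrolled", "event is not in the tracking plan"),
        ("Product Viewed", "name is not snake_case"),
    ]
    for (event, reason), count in summarize_violations(observed):
        print(f"{count}x {event}: {reason}")
```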

With Unify, organizations are also able to see the complete history of each customer in a single profile, and send those profiles to their data warehouse for further enrichment.

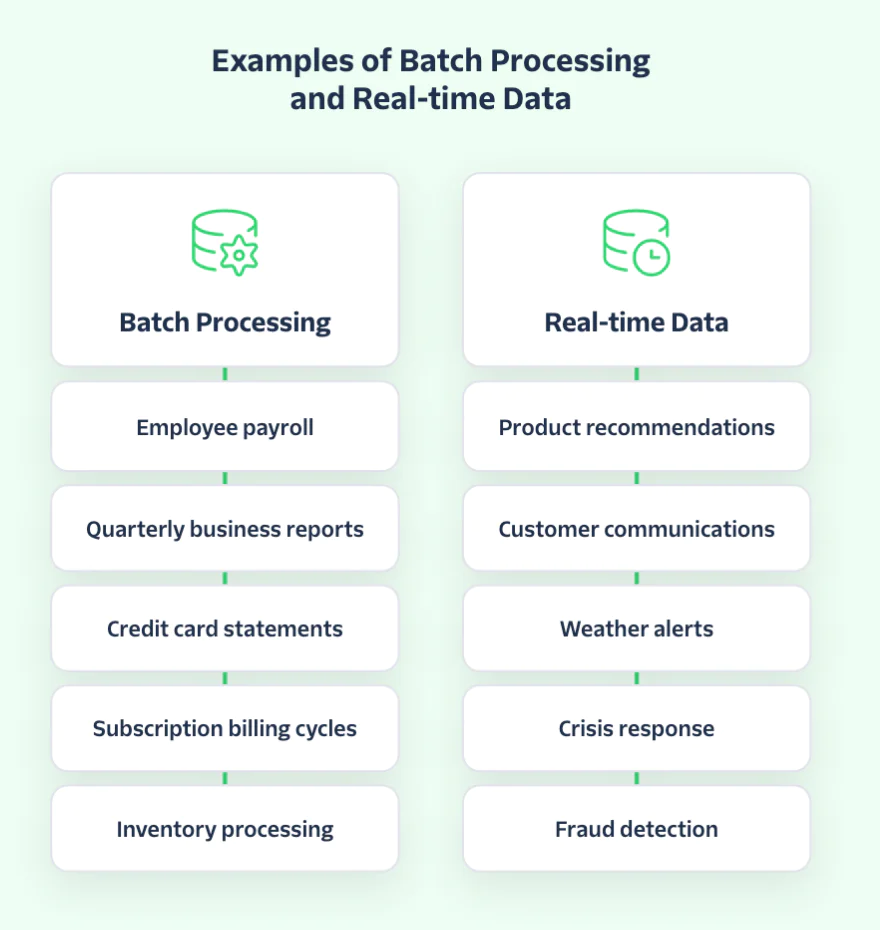

Segment processes 400,000 events per second. Recently, we processed 1.1 trillion API calls in a single month. Real-time data serves several important purposes, starting with speed of insight. Think of IoT devices or weather and traffic alerts: all of them rely on real-time data.

Being able to automate data processing, and know that customer profiles and audiences are updated in real time, is instrumental for businesses. This is how companies can identify churn risks, pinpoint the right time to send a discounted offer to a customer, and stay agile enough to adapt to fast-moving trends.

Take a peek under the hood of Segment’s infrastructure to learn more about how we collect, process, aggregate, and deliver data.

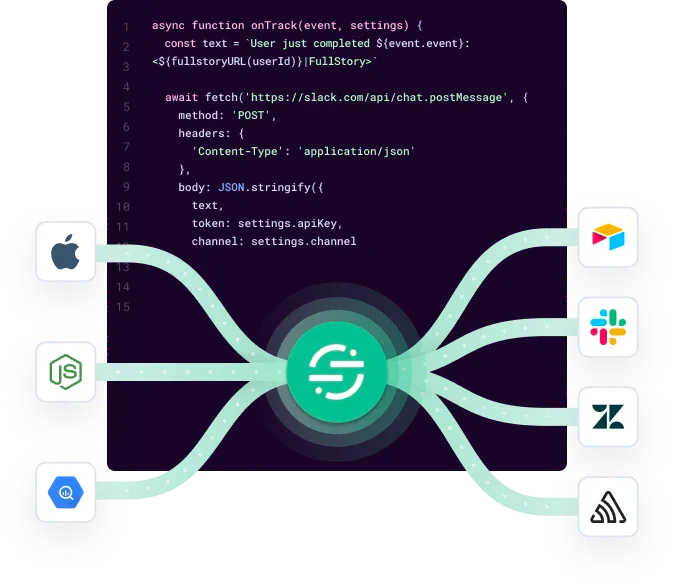

The tools you use today may not be the ones you use tomorrow. Segment offers seamless data integration with Connections, which comes with over 450 pre-built connectors and the ability to create a custom source or destination with a few lines of code.

Artificial intelligence and machine learning are embedded into several Segment features, like Predictive Traits. With Predictive Traits, teams are able to predict the likelihood that a certain user will perform an event, like a propensity to purchase or the likelihood of providing a referral.

Below, we show details on how a prediction for a person’s lifetime value would be created (by selecting a purchase event, revenue property, and the currency).

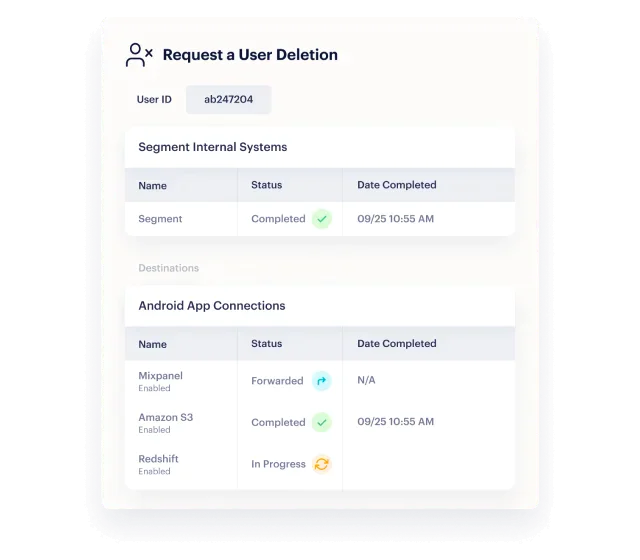

Segment’s CDP is built with privacy regulations and best practices in mind. When you learn that a customer would like to change their privacy preferences or be removed from your database completely, Segment can automatically perform these actions (i.e., user suppression or deletion).

Segment also automates data governance to protect data privacy at scale, like automatically classifying different data types based on their risk level (e.g., a social security number = high risk, whereas a job title = lower risk).
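Risk-based classification can be pictured as a lookup from field name to risk level. The sketch below is a generic illustration, not Segment's implementation: only the social security number and job title examples come from the text, while the other entries, the "unclassified" default, and the helper names are assumptions.

```python
# Hypothetical risk levels keyed by normalized field name.
FIELD_RISK = {
    "social_security_number": "high",
    "credit_card_number": "high",
    "email": "medium",
    "job_title": "low",
}


def classify_field(name: str, default: str = "unclassified") -> str:
    """Return the risk level for a field name, normalizing case and spaces."""
    normalized = name.strip().lower().replace(" ", "_")
    return FIELD_RISK.get(normalized, default)


def classify_record(record: dict) -> dict[str, str]:
    """Map every field in a record to its risk level."""
    return {field: classify_field(field) for field in record}


if __name__ == "__main__":
    print(classify_record({"Social Security Number": "000-00-0000", "job_title": "Analyst"}))
```

Fields that fall through to the default are a useful signal that the classification rules need a human review.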

Segment’s Customer Data Platform (CDP) is an example of an automated data system. It can automate data cleaning, transformation, and the creation of unified customer profiles or audiences. On top of that, it can automatically flag incorrect or inconsistent data, and instantly mask sensitive data to stay compliant with privacy regulations.

The uses for data automation are varied, ranging from ETL processes, to fraud detection, automated reporting, marketing automation, customer journey orchestration, and more.

Data analytics is one of the most popular uses for data automation, with various tools available to help automatically visualize and analyze data (with Power BI or Looker being two noteworthy ones).

Data automation challenges include growing data volumes, maintaining data privacy and security, and ensuring accuracy at scale.